As AI transforms diagnostics, treatment planning, and patient monitoring in clinics worldwide, one pressing question confronts every doctor and healthcare administrator: when the algorithm errs, who stands in the courtroom? This in-depth analysis gives physicians and clinic owners practical legal security strategies amid rapidly evolving regulations and real-world risks.

In the precision-driven world of modern medicine, artificial intelligence promises faster diagnoses, personalized treatments, and streamlined operations. Yet beneath this transformative potential lies a critical vulnerability: when the machine fails, who is legally responsible?

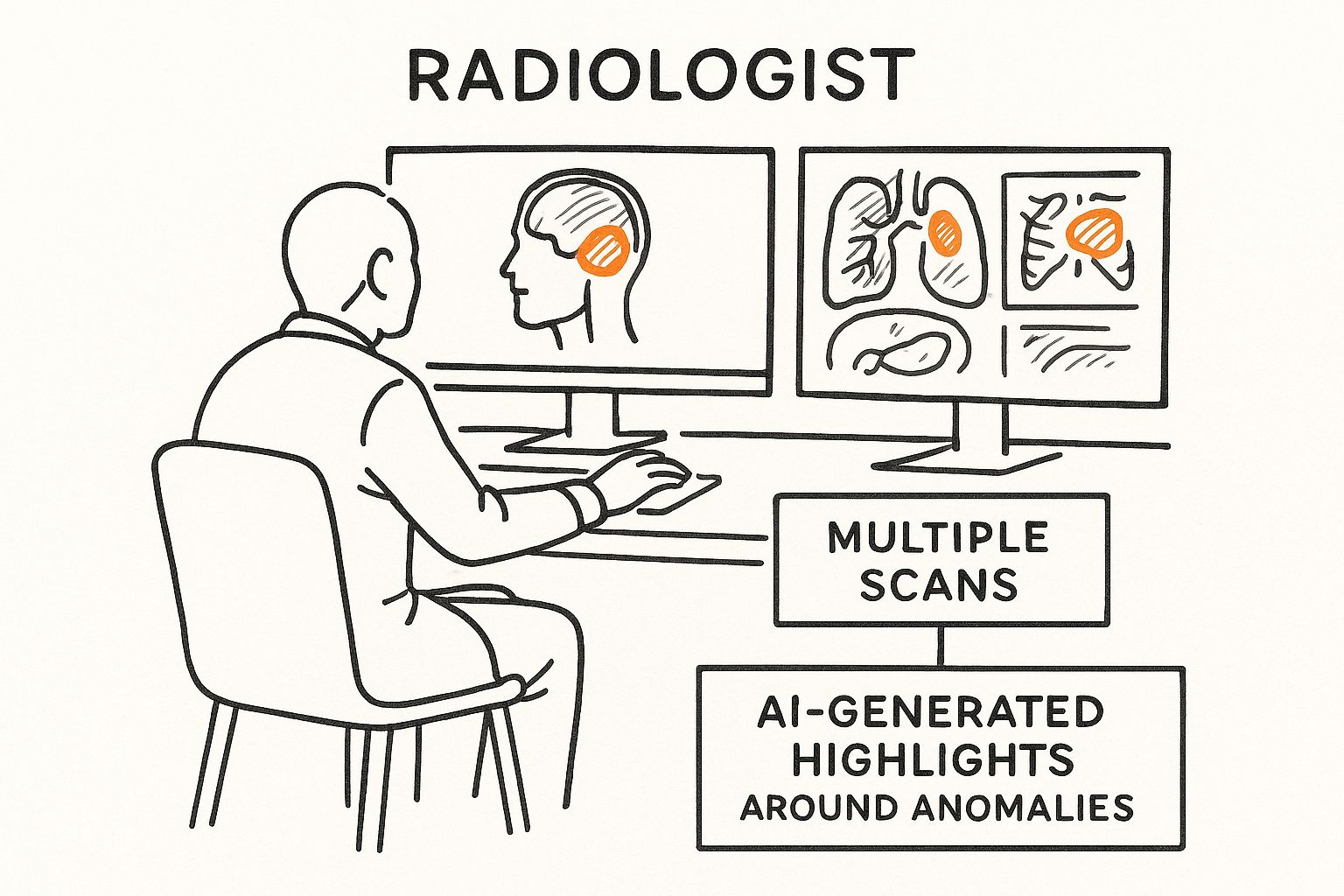

Picture this: an AI-powered imaging tool misses a subtle early-stage malignancy. The physician, trusting the algorithm’s 98% accuracy rating, proceeds with a conservative plan. Months later, the patient’s condition worsens dramatically, and a malpractice suit follows. Who bears the brunt: the radiologist, the hospital system, the AI developer, or the software vendor?

For doctors and clinic owners, the risk behind this scenario is not hypothetical. It is the new frontier of legal security in healthcare. Regulatory frameworks are struggling to keep pace, and liability doctrines remain fragmented. This article examines the current landscape, real-world implications, and practical strategies to protect your practice, your patients, and your professional future.

The Evolving Regulatory Landscape: FDA, EU AI Act, and Global Standards

Healthcare AI operates in a regulatory gray zone that is rapidly clarifying but still leaves significant exposure for providers. In the United States, the FDA regulates many AI/ML-enabled tools as Software as a Medical Device (SaMD). Clearance or authorization through premarket pathways such as 510(k) or De Novo does not, by itself, shield manufacturers or users from liability when harm occurs.

Across the Atlantic, the EU AI Act (in force since August 2024, with high-risk obligations phasing in between 2026 and 2027) classifies most AI-based medical devices, namely those requiring notified-body assessment under the EU medical device rules, as “high-risk.” It mandates rigorous risk management, data governance, human oversight, and transparency. The revised Product Liability Directive, adopted in 2024, explicitly treats software as a “product.” It also eases claimants’ burden of proof in technically complex cases, shifting exposure toward developers and other economic operators.

Key takeaway for clinics: compliance is now a liability issue, not just a regulatory one. A failure to implement required human oversight or bias audits could be cited as evidence of negligence in court.

“Creators of autonomous AI should assume liability for harms when the device is used properly and on-label and obtain medical malpractice insurance.” — Cestonaro et al., Defining medical liability when artificial intelligence is applied (2023)

This regulatory momentum underscores a vital truth: legal security begins with proactive alignment with these requirements, not reactive defense after harm occurs.

Real-World Scenarios: Assigning Blame When AI Errs

Although verdicts in AI-related malpractice cases remain rare, early cases and hypotheticals point to troubling patterns. Studies of AI-assisted radiology, including experiments with mock jurors, suggest a specific risk: when an AI tool flags an abnormality that the radiologist misses, jurors are more likely to side with the plaintiff. Disagreement with the AI may therefore be treated as strong evidence of negligence.

In the U.S., traditional malpractice principles still apply: physicians must exercise reasonable care. Blind reliance on AI without independent verification can breach the standard of care. Hospitals may face vicarious liability, while developers risk product-liability claims for design defects, biased training data, or inadequate warnings.

In Europe, the regulatory framework pushes accountability upstream to manufacturers, yet deployers (hospitals and clinicians) retain duties of human oversight and informed consent. One European analysis warns that unclear allocation of responsibility among manufacturers, providers, and clinicians creates a “harm complex” that could overwhelm practices unprepared for litigation.

“The law of medical negligence is all about what the reasonable person would do. And so, by adopting basic tenets of responsible use of AI, I think it’s fair to say we can protect physicians fairly well from liability.” — Stanford HAI expert analysis (2024)

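These scenarios highlight that AI does not replace clinical judgment; it amplifies the need for it. Some clinic owners treat AI as a “black box,” adopting tools without understanding their intended use, validation, and limitations. They invite unnecessary legal exposure.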

Proactive Legal Security Measures: Protecting Your Clinic and Career

Legal security is not about avoiding AI; it is about deploying it responsibly. Forward-thinking physicians and administrators are implementing these safeguards:

- Maintain Human Oversight: Always perform independent clinical review. Document your reasoning when overriding or confirming AI outputs.

- Secure Robust Contracts and Insurance: Negotiate clear indemnification clauses with AI vendors. Ensure malpractice policies explicitly cover AI-augmented care.

- Prioritize Informed Consent: Disclose AI use to patients and explain its limitations; transparency can reduce litigation risk.

- Conduct Regular Audits and Training: Implement bias detection, performance monitoring, and staff education aligned with FDA and EU requirements.

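- Track Algorithm Updates: For adaptive AI, confirm whether the vendor’s device has an FDA-authorized Predetermined Change Control Plan (PCCP). A PCCP specifies the modifications the manufacturer may make without a new marketing submission. Also document which algorithm version your clinic is using at any given time.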

“AI integration in healthcare is outpacing legal frameworks, creating liability challenges for physicians, health systems, and manufacturers.” — Medical Economics (2025)

By embedding these practices, clinics transform potential vulnerability into a competitive advantage: safer care, stronger defense, and greater peace of mind.

Conclusion

The question “Who is responsible when the machine fails?” has no single answer—yet. What is certain is that doctors and clinic owners who treat legal security as a core operational priority will thrive in the AI era. Regulatory clarity is coming. Technological capability is accelerating. The professionals who combine both with disciplined human judgment will not only minimize liability—they will lead the next generation of ethical, patient-centered care.

The future of medicine is collaborative: human expertise augmented by artificial intelligence, protected by proactive legal strategies. Your clinic’s resilience starts today—with informed decisions, documented processes, and an unwavering commitment to both innovation and accountability.

Ready to strengthen your practice’s legal posture in the age of AI? Consult your risk-management advisor and review vendor contracts this quarter. The machine may suggest, but only you decide—and only you can secure the outcome.